In One Line: RAG is when an AI looks up the right notes before it answers instead of bluffing from memory.

AI chatbots can sound impressively sure of themselves. That is part of the problem. They can also be wrong in a smooth, confident, almost theatrical way. RAG is one of the main techniques used to reduce that.

RAG stands for retrieval-augmented generation. The name sounds like it was built in a lab by people who fear sunlight. The idea is much simpler: before the AI answers, it goes and retrieves useful information from a trusted source, then uses that material to build the reply.

So instead of relying only on what it learned during training, the model gets fresh context at the moment you ask the question. That context might come from company docs, PDFs, help articles, a product database, research files, or other approved sources.

What RAG Means In Plain English

Imagine two assistants.

The first one answers from memory. Sometimes that is enough. Sometimes it is not. If you ask about your brand guide from last week, your latest pricing sheet, or a niche topic buried in a 60-page PDF, that assistant may guess.

The second assistant quickly checks the right folder, pulls the most relevant notes, and then answers using those notes. That second assistant is much closer to how RAG works.

It is not magic. It is closer to “smart open-book answering.” Which, to be fair, is still pretty useful.

A Simple Example

Say you run a small online store. You upload your shipping policy, return rules, product catalog, and support scripts into a system.

Then a customer asks, “Can I return a sale item from Taiwan if the box is open?”

A normal chatbot might answer from general internet-style knowledge. A RAG system first searches your actual store documents, finds the return policy section, pulls the relevant chunk, and uses that to answer. That makes the reply more specific, more current, and easier to trace back to a source.

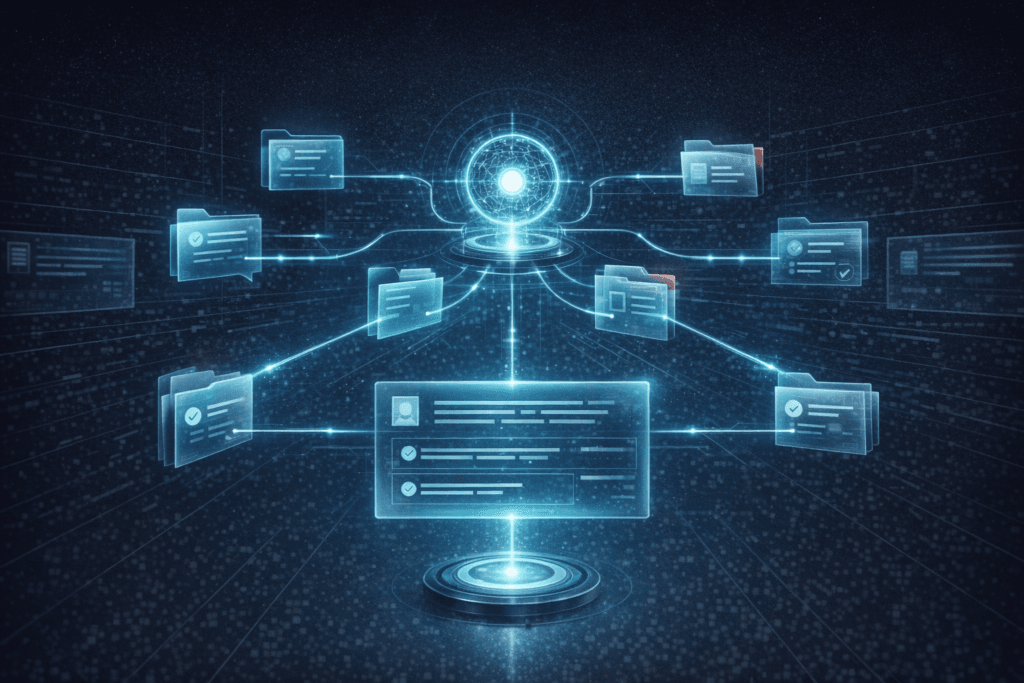

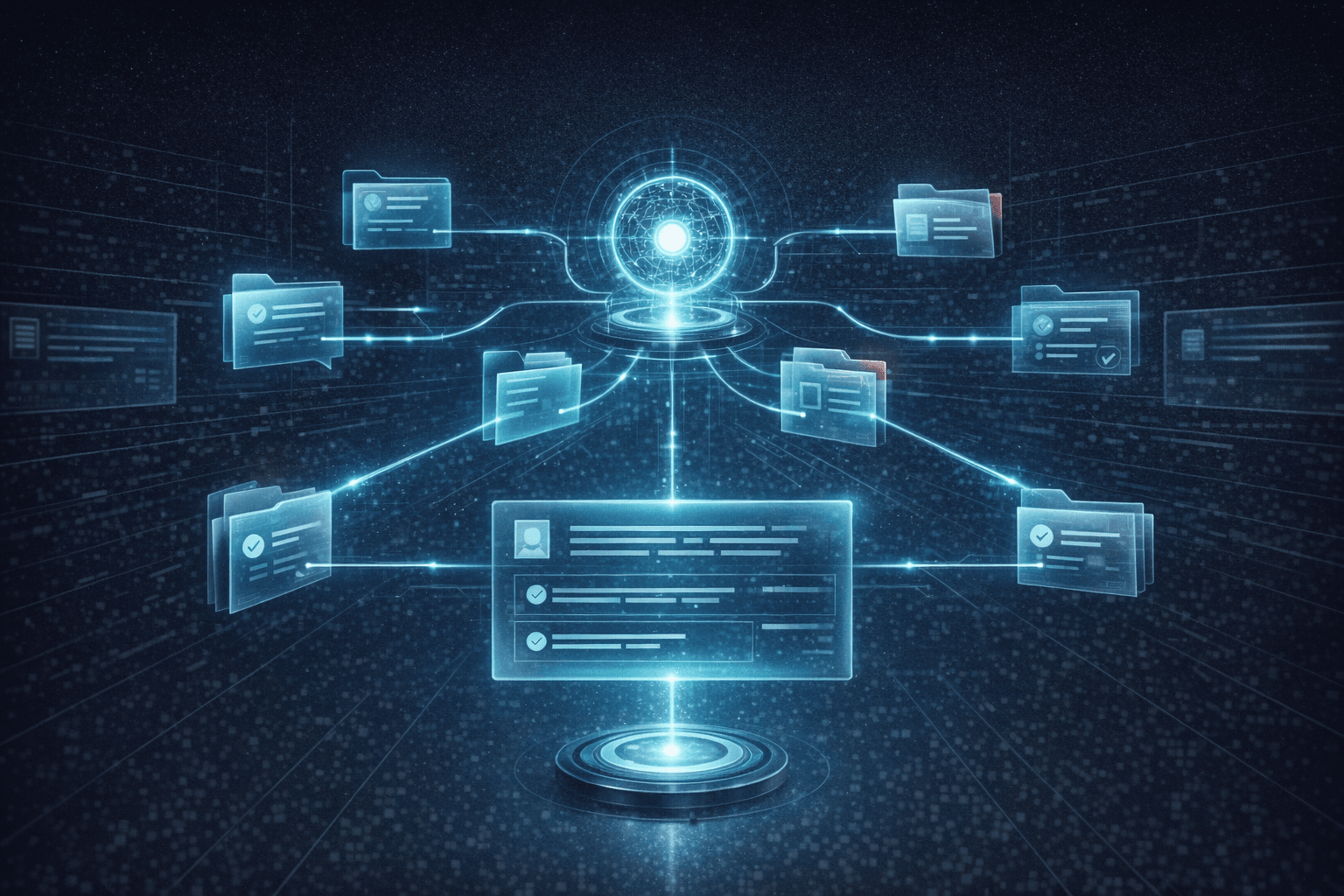

How RAG Works

Under the hood, RAG usually works in a few steps.

- Your documents get prepared and broken into smaller chunks.

- Those chunks are turned into embeddings: lists of numbers that represent meaning, so the system can match related ideas and not just exact words.

- When a user asks something, the system searches for the most relevant chunks.

- The best chunks are passed into the model as context.

- The model writes an answer using both the question and the retrieved material.

That chunking part matters more than people think. If the source material is split badly, retrieved badly, or ranked badly, the answer can still go sideways. RAG is only as helpful as the information it finds and the way it finds it.
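The steps above can be sketched in a few lines of Python. This is a toy illustration, not how any particular product does it. The store documents, the 14-day return window, and all function names are made up for the example. The `embed` function here only counts words, which is a stand-in: real systems use a learned embedding model that captures meaning, and they send the final prompt to a language model, which this sketch leaves out.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 40) -> list:
    """Step 1: split a document into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Step 2 (toy stand-in): word counts instead of a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks: list, top_k: int = 2) -> list:
    """Step 3: rank chunks by similarity to the question and keep the best ones."""
    q = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, context: list) -> str:
    """Steps 4 and 5: hand the retrieved chunks to the model alongside the question."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{joined}\n\nQuestion: {question}"
    )


# Hypothetical store documents, invented for this example.
documents = [
    "Return policy: sale items can be returned within 14 days, even if the box is open.",
    "Shipping policy: orders ship within 2 business days from our warehouse.",
]
chunks = [c for doc in documents for c in chunk_text(doc)]

question = "Can I return a sale item if the box is open?"
top_chunks = retrieve(question, chunks, top_k=1)
prompt = build_prompt(question, top_chunks)
print(prompt)
```

In this sketch the return-policy chunk wins because it shares the most words with the question. Swap in a badly split or badly ranked set of chunks and the prompt fills up with the wrong notes, which is the failure described above.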

| Without RAG | With RAG |
|---|---|
| Answers from training and memory | Answers with retrieved source material |
| May be outdated | Can use newer documents |
| Harder to trace | Often easier to cite or verify |
| More likely to generalize | More likely to stay on your topic |

Why People Use RAG

The big reason is simple: most AI models are not born knowing your stuff. They know patterns from training data, but they do not automatically know your newest docs, your internal rules, or your own pile of strange little business details.

RAG helps because it can bring in outside knowledge at answer time. That means better odds of fresh information, domain-specific answers, lower retraining cost, and more control over what the model uses. It can also support source-backed replies, which helps with trust.

For creators, that matters in very ordinary ways. Think content libraries, brand guidelines, interview transcripts, course notes, product documentation, client briefs, or a messy archive of research PDFs that nobody wants to read twice.

What RAG Looks Like For Creators

RAG is not just for giant companies with too many dashboards. Creators can use it too.

- A writer can ask a tool to search past interviews and pull quotes before drafting.

- A marketer can build a chatbot on product pages, positioning docs, and customer FAQs.

- A course creator can let students ask questions across lessons, worksheets, and transcripts.

- A small team can query a pile of SOPs, proposals, and meeting notes without playing digital archaeology.

In all of those cases, the model is not becoming smarter in some permanent, mystical sense. It is being given better notes at the right moment.

What RAG Is Not

RAG is not the same as training a model from scratch.

It is not the same as fine-tuning either. Fine-tuning changes the model itself by training it further. RAG usually leaves the model alone and changes the context it sees before answering.

That is why RAG is often the faster first move when your problem is “the AI needs access to my documents.” If your information changes often, RAG is usually more practical than retraining every time a policy, catalog, or file changes.

But RAG is not a cure-all. If you want a model to learn a new style, new tone, or a very specific repeated behavior, fine-tuning may still matter. In many real systems, teams use both.

When RAG Works Best

RAG shines when the answer lives somewhere outside the model and can be retrieved. That includes documentation, policies, knowledge bases, product specs, research files, and support content, as long as they are searchable.

It also works well when information changes often. Instead of baking new facts into a model, you update the source material and let retrieval do the work. That is one reason RAG became so popular so fast.
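Here is a minimal sketch of what "update the source material" can look like. The `NoteIndex` class, the document id, and the prices are hypothetical, and the word-overlap search is a simple stand-in for real retrieval. The point is only that replacing a stored document changes what gets retrieved, with no model training involved.

```python
from typing import Optional


class NoteIndex:
    """Tiny in-memory index keyed by document id (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self._docs = {}

    def upsert(self, doc_id: str, text: str) -> None:
        """Add a document, or replace the old version stored under the same id."""
        self._docs[doc_id] = text

    def search(self, query: str) -> Optional[str]:
        """Return the document that shares the most words with the query, if any."""
        query_words = set(query.lower().split())
        best_id, best_score = None, 0
        for doc_id, text in self._docs.items():
            score = len(query_words & set(text.lower().split()))
            if score > best_score:
                best_id, best_score = doc_id, score
        return self._docs[best_id] if best_id is not None else None


index = NoteIndex()
index.upsert("pricing", "The standard plan price is 10 dollars per month.")
before = index.search("standard plan price")

# The pricing sheet changes: overwrite the document, leave the model alone.
index.upsert("pricing", "The standard plan price is 12 dollars per month.")
after = index.search("standard plan price")

print(before)
print(after)
```

The second search returns the new pricing text because the old version was overwritten under the same id.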

Where RAG Still Falls Short

RAG can still fail in boring, very human ways.

- The system may retrieve the wrong chunk.

- The right chunk may exist, but rank too low.

- The source docs may be messy, outdated, or contradictory.

- The answer may overstate what the source actually says.

- Access rules may be enforced poorly if the setup is sloppy, so the system retrieves documents a user should not see.
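Two of the failure modes in this list can be softened with simple guards, sketched below under stated assumptions. The `Chunk` class, the `allowed_roles` tag, the `min_score` cutoff of 0.3, and the sample texts are all invented for illustration, and the word-overlap score is a stand-in for a real relevance score. The idea is to filter by permissions before ranking, and to return nothing when the best match is weak so the caller can say "I don't know" instead of guessing.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_roles: frozenset  # hypothetical access tag, e.g. {"customer", "staff"}


def words(text: str) -> set:
    """Lowercase word set used by the toy relevance score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_score(question: str, text: str) -> float:
    """Fraction of the question's words that also appear in the chunk."""
    q = words(question)
    return len(q & words(text)) / len(q) if q else 0.0


def safe_retrieve(question: str, chunks: list, role: str, min_score: float = 0.3) -> list:
    """Drop chunks the role may not see, then drop weak matches, then rank."""
    visible = [c for c in chunks if role in c.allowed_roles]
    scored = [(overlap_score(question, c.text), c) for c in visible]
    kept = [(s, c) for s, c in scored if s >= min_score]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in kept]


chunks = [
    Chunk("Refunds are issued within 5 days of an approved return.",
          frozenset({"customer", "staff"})),
    Chunk("Internal note: staff may approve refunds up to 200 dollars without a manager.",
          frozenset({"staff"})),
]

question = "Can staff approve refunds without a manager?"
for_customer = safe_retrieve(question, chunks, role="customer")
for_staff = safe_retrieve(question, chunks, role="staff")
off_topic = safe_retrieve("What is the weather today?", chunks, role="staff")

print(len(for_customer), len(for_staff), len(off_topic))
```

Filtering before ranking matters: if permissions were checked only after the model had seen the chunk, the restricted text could already have shaped the answer.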

There is another limit worth knowing: RAG is great for finding relevant pieces, but it is not always the best tool for handling whole-document tasks. AWS notes that RAG does not work especially well when you want to summarize entire documents. That is a useful reality check, because AI marketing pages do enjoy acting like one hammer can fix every wall in the house.

How To Tell If A Tool Uses RAG

Most products will not proudly wave a little flag that says “hello, I am using retrieval-augmented generation.” But there are clues.

- You can connect your own docs, PDFs, help center, or database.

- The tool cites sources or links back to documents.

- Answers improve when your source files are updated.

- The product talks about grounding, knowledge bases, search, indexing, or retrieval.

If the tool says it can answer from your content without retraining the model, there is a good chance some form of RAG is involved.
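The source-citing clue usually comes from a simple design choice: each chunk keeps a note of where it came from. The sketch below shows one way that could look. The `SourcedChunk` class, the file names, and the numbering format are hypothetical, not taken from any specific tool.

```python
from dataclasses import dataclass


@dataclass
class SourcedChunk:
    text: str
    source: str  # file name or URL the chunk was taken from


def format_context(chunks: list) -> str:
    """Number each chunk so the model can refer to it as [1], [2], and so on."""
    return "\n".join(f"[{i}] {c.text}" for i, c in enumerate(chunks, start=1))


def format_sources(chunks: list) -> str:
    """Build the matching source list shown to the reader under the answer."""
    return "\n".join(f"[{i}] {c.source}" for i, c in enumerate(chunks, start=1))


# Hypothetical retrieved chunks.
chunks = [
    SourcedChunk("Opened items can be returned for store credit.", "returns-policy.pdf"),
    SourcedChunk("Orders ship within 2 business days.", "shipping-faq.md"),
]

context_block = format_context(chunks)
sources_block = format_sources(chunks)
print(context_block)
print(sources_block)
```

Because the numbers in the context and the source list line up, a reader can trace a claim tagged `[1]` back to the file it came from.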

The Simple Way To Remember It

Here is the easiest mental model:

RAG is not teaching the AI new facts forever. It is handing the AI the right reference pages right before the test.

That is why RAG matters to non-technical creators. You do not need to understand every vector, pipeline, or ranking trick to understand the value. If an AI can search the right materials before it speaks, you have a better shot at answers that are useful instead of merely fluent.

And in AI, that difference is not small. It is the difference between a confident guess and a grounded answer. One sounds smart. The other is actually helpful.