AI writing tools are full of terms that sound obvious until you try to use them and realize half the interface seems written for people who already live inside prompt boxes.

That is the real problem. Most beginners are not stuck because the tools are too advanced. They are stuck because the language around the tools is weirdly unclear, casually technical, and often explained by people who forget what it is like to be new.

So here are the beginner terms in AI writing tools, explained simply and without the robot fog. You will get plain-English definitions, what each term means in practice, and where people tend to get confused. If you have ever nodded along to words like “prompt,” “context window,” or “fine-tuning” while quietly thinking, “Sure, absolutely, no idea,” this should help.

And no, you do not need to memorize every piece of AI jargon to use these tools well. You just need to understand the handful of terms that affect how you write, edit, and get better output.


Why AI writing terms feel more confusing than they should

A lot of AI terms are borrowed from technical fields, then tossed into marketing pages as if everybody already knows what they mean. That is why simple tasks like “write me a better LinkedIn post” suddenly come with language about models, tokens, temperature, memory, and generations.

The annoying part is that some of these terms matter a lot, and some barely matter unless you are doing more advanced work. Beginners usually get handed all of them at once, which is a great way to make a useful tool feel harder than it is.

The better approach is simpler: learn the core terms that affect output quality, speed, and control. Ignore the rest until you actually need them.

The core AI writing terms beginners should actually know

These are the terms you will see most often in AI writing tools, tutorials, and settings.

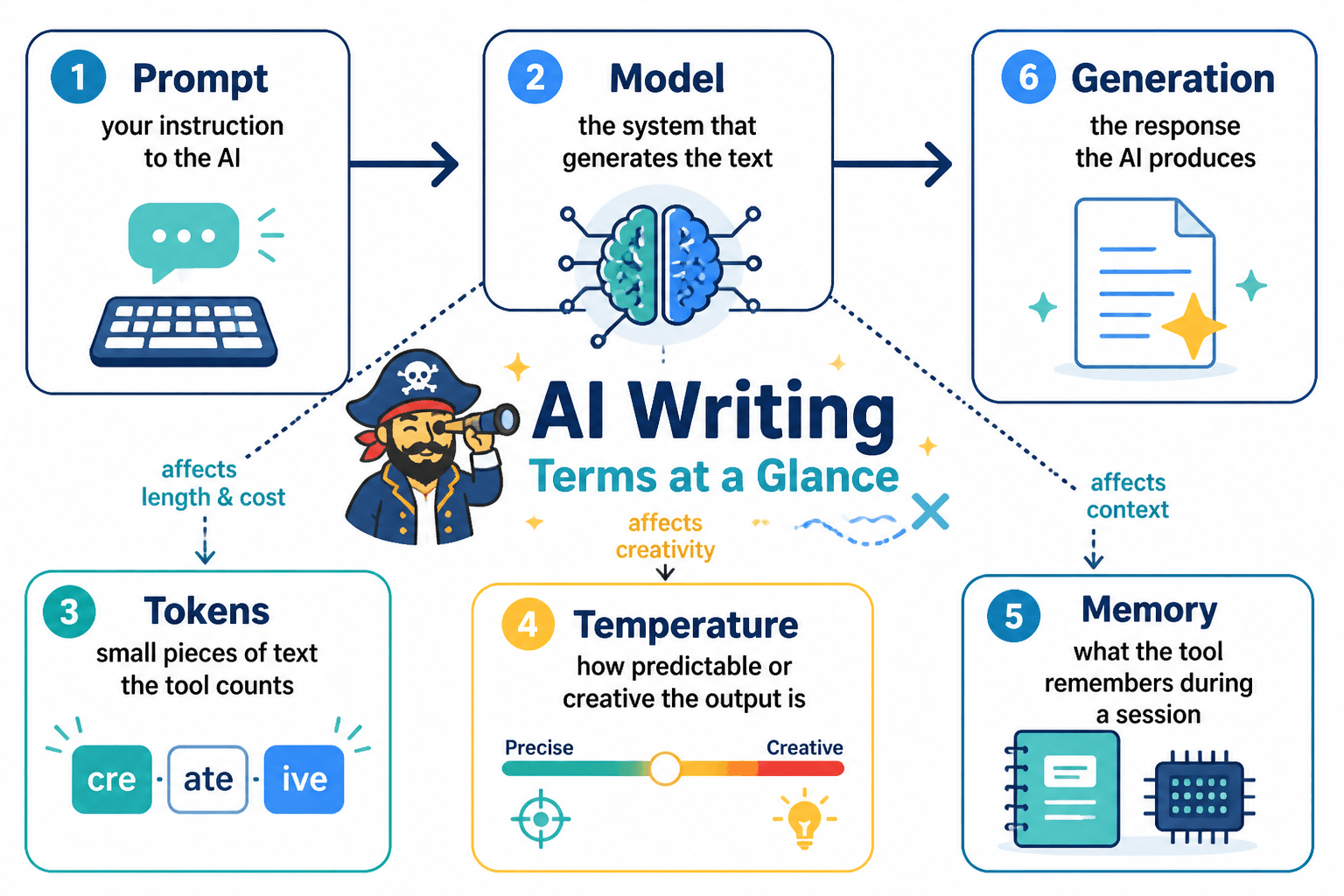

Prompt

A prompt is the instruction you give the AI.

That can be one sentence, a paragraph, a list of rules, or a full brief. If you type “Write an Instagram caption for a life coach,” that is a prompt. If you type three paragraphs explaining your audience, tone, goal, and CTA, that is also a prompt. Just a better one.

Beginners often think prompts need to sound technical. They do not. Clear beats fancy. Every time.

Simple rule: if a decent human writer would understand your instructions, the AI probably has a fighting chance too.

Output

The output is what the AI gives back.

If your prompt is the input, the response is the output. Simple enough. It still matters, because a lot of frustration with AI writing tools is really frustration with output quality. People blame the tool when the real issue is often the prompt, the missing context, or unrealistic expectations.

Model

A model is the AI system doing the writing.

Different tools may use different models, and some tools let you choose between them. One model might be faster. Another might be better at reasoning. Another might be better at long-form writing. Think of the model as the engine under the hood, not the app dashboard you click around in.

This is one of those terms that sounds intimidating but is mostly just useful for comparison. If two tools look similar but produce different quality, the model is often part of the reason.

Context

Context is the background information the AI uses to generate a response.

This could include:

- Your earlier messages

- A brand voice guide

- Examples you pasted in

- A document you uploaded

- Instructions you added before the main task

When people ask, “Why does the AI keep giving me generic fluff?” the answer is often that it has almost no useful context. You asked for good writing while giving it crumbs.

Context window

The context window is how much information the AI can consider at once.

If that sounds abstract, think of it like working memory. A bigger context window means the tool can handle more text, instructions, examples, or conversation history in one go without losing track.

For writers, this matters when you want the tool to review a long article, keep track of a full brand guide, or rewrite several sections while staying consistent. If the context window is too small, things can get messy fast.
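If you are curious what “losing track” can look like mechanically, here is a minimal Python sketch. It assumes the tool drops the oldest messages first once the conversation outgrows a fixed budget, and it estimates size with a rough rule of thumb of about four characters per token (a token is a small chunk of text). Both are simplifying assumptions: real tools count with the model's own tokenizer, and some summarize or refuse instead of trimming. `estimate_tokens` and `fit_to_window` are hypothetical names for illustration.

```python
def estimate_tokens(text: str) -> int:
    # Assumption: roughly 4 characters per token, rounded up. Real counts depend on the model.
    return (len(text) + 3) // 4


def fit_to_window(messages: list[str], budget: int) -> list[str]:
    """Keep the newest messages that fit within the token budget; drop older ones."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > budget:
            break  # this message and everything older is dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore the original order


history = [
    "Brand guide: warm, plain English, no hype, never say 'revolutionary'.",
    "Draft an intro for our spring launch email.",
    "Make it shorter.",
    "Now add a line about free shipping.",
]
print(fit_to_window(history, budget=20))
```

With a budget of 20, this sketch keeps only the last two messages, and the brand guide you pasted first is gone. That is one way a long chat can quietly drift off-voice.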

Token

A token is a small chunk of text the AI processes.

Not exactly a word, but close enough for beginner purposes. A short word may be one token. A longer word might be split into more. Spaces and punctuation can count too, because apparently language needed one more way to be irritating.

You will see tokens mentioned in pricing, usage limits, and context windows. In practical terms, a higher token limit means the tool can take in more input and produce more output before it hits a cap.
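To make tokens a little less abstract, here is a toy Python sketch. The four-characters-per-token figure is a commonly quoted rough average for English text, not an exact rule, and `toy_split` (a hypothetical helper) only separates words from punctuation. Real tokenizers, such as the byte-pair encoding schemes many models use, also break long or unusual words into smaller pieces, so actual counts differ by model.

```python
import re


def rough_token_estimate(text: str) -> int:
    """Ballpark only: assumes about 4 characters per token, a rule of thumb for English."""
    if not text:
        return 0
    return max(1, round(len(text) / 4))


def toy_split(text: str) -> list[str]:
    """Toy illustration: words and punctuation marks become separate pieces.
    Real tokenizers also split long words into sub-word chunks."""
    return re.findall(r"\w+|[^\w\s]", text)


prompt = "Write an Instagram caption for a life coach."
print(toy_split(prompt))
print(rough_token_estimate(prompt))
```

The estimate is handy for a quick sense of whether a long document might bump into a limit. For exact numbers, use whatever counter your tool provides, if it offers one.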

Temperature

Temperature controls how predictable or creative the output is.

Lower temperature usually means more stable, direct, and consistent responses. Higher temperature usually means more variety, more surprise, and sometimes more nonsense. Great for brainstorming. Less great when you need clean, reliable copy that does not wander off and invent a personality disorder for your brand.

If your tool gives you a temperature setting, here is the easy version:

- Low: better for summaries, straightforward copy, structured writing

- Medium: useful for balanced marketing content and ideation

- High: better for brainstorming, variation, creative angles, rough idea generation
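Under the hood, temperature is commonly applied by dividing the model's raw scores for candidate next words by the temperature value before turning them into probabilities. The Python sketch below shows that textbook mechanism with made-up scores for three hypothetical candidate words. It is an illustration, not how any particular tool implements its setting, and some tools label the control differently or hide it entirely.

```python
import math
import random


def softmax_with_temperature(scores: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [s / temperature for s in scores]
    top = max(scaled)  # subtracting the max keeps math.exp from overflowing
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def pick_next_word(words: list[str], scores: list[float], temperature: float,
                   rng: random.Random) -> str:
    """Sample one word; lower temperature makes the top-scoring word more likely."""
    probs = softmax_with_temperature(scores, temperature)
    return rng.choices(words, weights=probs, k=1)[0]


# Made-up scores for three candidate next words.
words = ["reliable", "bold", "galactic"]
scores = [2.0, 1.0, 0.5]

for t in (0.5, 2.0):
    probs = softmax_with_temperature(scores, t)
    print(t, [round(p, 2) for p in probs])
print(pick_next_word(words, scores, 0.5, random.Random(42)))
```

With these example scores, a temperature of 0.5 gives the top word roughly 84% of the probability, while 2.0 drops it to about 48% and hands more weight to the long shots. That is the “more variety, sometimes more nonsense” effect in numbers.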

System prompt

A system prompt is a higher-level instruction that tells the AI how to behave.

You will not always see this directly, but many AI writing tools use hidden system prompts in the background. These shape tone, role, boundaries, or task behavior.

For example, a writing assistant might be told to act as a helpful editor, stay concise, avoid certain claims, or follow a brand voice. If the output feels strangely consistent or boxed in, a system prompt may be part of the reason.
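Many chat-style tools and APIs represent this as a list of messages, each tagged with a role such as “system” or “user.” The Python sketch below builds that kind of list. The role names follow a widely used convention, but the exact format varies by tool, and `build_messages` is a hypothetical helper, not part of any particular library.

```python
from typing import Optional


def build_messages(system_prompt: str, user_prompt: str,
                   history: Optional[list[dict[str, str]]] = None) -> list[dict[str, str]]:
    """Put the behavior-setting system prompt first, then earlier turns, then the new request."""
    messages = [{"role": "system", "content": system_prompt}]
    messages.extend(history or [])
    messages.append({"role": "user", "content": user_prompt})
    return messages


messages = build_messages(
    system_prompt="You are a concise editor. Follow the brand voice: warm, plain, no hype.",
    user_prompt="Tighten this intro: Our app helps teams write faster.",
)
for m in messages:
    print(f"{m['role']}: {m['content']}")
```

In this setup the system message is sent along with every request, which is how it can shape replies even though you never see it in the chat window.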

Template

A template is a pre-built prompt or workflow for a specific task.

Examples:

- Blog intro generator

- Product description template

- Email subject line generator

- LinkedIn post framework

Templates can be helpful, especially when you are starting. But they are also where a lot of stale, samey content begins. If everybody uses the same template with the same lazy input, the output starts smelling like AI from across the room.
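A template is usually just a prompt with blanks. Here is a minimal Python sketch using the standard library's `string.Template`; the product-description wording, field names, and sample inputs are hypothetical. One design choice worth copying: `substitute()` raises a `KeyError` when a field is missing, so you cannot quietly send a half-empty brief. The quality of what you put in those blanks is still on you.

```python
from string import Template

# Hypothetical template; the field names are made up for illustration.
PRODUCT_DESCRIPTION = Template(
    "Write a product description for $product.\n"
    "Audience: $audience\n"
    "Tone: $tone\n"
    "Must mention: $details\n"
    "Length: under 80 words."
)


def fill_template(**fields: str) -> str:
    """Fill every blank; substitute() raises KeyError if any field is missing."""
    return PRODUCT_DESCRIPTION.substitute(**fields)


print(fill_template(
    product="a ceramic travel mug",
    audience="remote workers who hate lukewarm coffee",
    tone="warm, a little playful",
    details="dishwasher safe; fits most car cup holders",
))
```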

Workflow

A workflow is the repeatable process you use with the tool.

For example:

- Brainstorm ideas

- Pick one

- Generate three hooks

- Draft the post

- Edit for voice

- Write CTA

This term matters because good AI use is rarely one magic prompt. It is usually a sequence. People who get decent results tend to build simple workflows instead of asking the tool to do everything perfectly in one shot.
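Here is that six-step workflow as a minimal Python sketch, where each step's output becomes the next step's input. `generate` is a hypothetical stand-in for whatever tool or API you use; the fake version at the bottom just echoes a label so the code runs without one. The step wording is illustrative, and in practice you would read and adjust each output before moving on.

```python
from typing import Callable

# Hypothetical: any function that takes a prompt and returns the tool's text output.
Generate = Callable[[str], str]

# One prompt per step; {input} is replaced with the previous step's output.
STEPS = [
    "Brainstorm five post ideas about: {input}",
    "Pick the strongest idea from this list:\n{input}",
    "Write three hooks for this idea:\n{input}",
    "Draft the post using the best hook:\n{input}",
    "Edit this draft to match our brand voice:\n{input}",
    "Write a call to action for this post:\n{input}",
]


def run_workflow(topic: str, generate: Generate, steps: list[str] = STEPS) -> list[str]:
    """Run each step in order, feeding every output into the next prompt."""
    outputs: list[str] = []
    current = topic
    for step in steps:
        current = generate(step.format(input=current))
        outputs.append(current)
    return outputs


def fake_generate(prompt: str) -> str:
    # Stand-in so the sketch runs offline: labels the output with the prompt's first line.
    return f"(output for: {prompt.splitlines()[0]})"


for result in run_workflow("remote work habits", fake_generate):
    print(result)
```

Keeping each step as its own small prompt makes it easy to see which step went wrong and redo just that one.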

Fine-tuning

Fine-tuning means training or adjusting a model further for a specific use case.

For most beginners, this is not something you need right now. It is more advanced and often used by teams, products, or specialized setups that need very specific behavior.

Important distinction: giving the AI examples in a prompt is not the same as fine-tuning. A lot of people blur those together. One is instruction. The other is deeper model customization.
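To make the distinction concrete, here is what “giving the AI examples” looks like: the examples are just text inside the prompt, and nothing about the model changes. This Python sketch uses a hypothetical helper and made-up example pairs. Fine-tuning, by contrast, would mean further training the model itself on many such examples, usually through a separate process run by the tool or provider.

```python
def few_shot_prompt(instruction: str, examples: list[tuple[str, str]], new_input: str) -> str:
    """Build one prompt: instruction, worked examples, then the new case left open."""
    parts = [instruction, ""]
    for note, tagline in examples:
        parts.append(f"Note: {note}\nTagline: {tagline}\n")
    parts.append(f"Note: {new_input}\nTagline:")
    return "\n".join(parts)


examples = [
    ("ships in 24 hours", "Here tomorrow, not someday."),
    ("made from recycled cotton", "Soft on you, easy on the planet."),
]
print(few_shot_prompt(
    "Rewrite each product note as a one-line tagline in our brand voice.",
    examples,
    "lifetime repairs",
))
```

Delete the examples and the next prompt starts from scratch, which is exactly why this counts as instruction rather than customization.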

Training data

Training data is the information the model learned from when it was built.

You do not usually control this in normal writing tools, but it helps explain why AI can produce fluent writing while still getting facts wrong, sounding generic, or missing nuance. It has patterns. It does not have taste, judgment, or a lived understanding of your business.

Hallucination

A hallucination is when the AI confidently makes something up.

This could be a fake statistic, invented quote, made-up feature, imaginary case study, or suspiciously neat summary of something that does not exist. The tone is often calm and polished, which is exactly why beginners trust it too quickly.

If the content includes facts, examples, names, claims, or references, check them. AI does not get a gold star for sounding sure of itself.

Iteration

Iteration means improving the result in rounds.

You ask for a draft. Then you refine it. Then you sharpen the tone. Then you shorten the intro. Then you rewrite the CTA. That is iteration.

This is how good users work. Not by expecting one perfect answer, but by directing the tool toward something better. AI writing tools are much more useful when treated like rough-draft assistants and much less useful when treated like tiny all-knowing copy chiefs.

Terms beginners often confuse

Some terms get mixed together constantly, so here is the cleaner version.

| Term | What it means | What it is not |
|---|---|---|
| Prompt | Your instruction to the AI | The same thing as the AI’s response |
| Model | The underlying AI engine | The app interface itself |
| Context | Background info used in the response | Only chat history |
| Template | A reusable prompt structure | A guarantee of good writing |
| Fine-tuning | Deeper model customization | Pasting examples into a prompt |
| Hallucination | Made-up content presented as fact | A creative flourish you asked for |

Once those basic terms are clear, most AI writing tools stop feeling mystical and start feeling easier to evaluate. That alone makes it much easier to choose tools, write better prompts, and avoid sounding confused when you talk about them.